What a load of COBOLx

- Chris Skinner, Chairman at Financial Services Club

- 02.05.2017 10:00 am

Chris Skinner is best known as an independent commentator on the financial markets through his blog, the Finanser.com, as author of the bestselling book Digital Bank, and as Chair of the European networking forum the Financial Services Club. He has been voted one of the most influential people in banking by The Financial Brand (which also named his blog one of the best), a FinTech Titan (Next Bank), one of the Fintech Leaders you need to follow (City AM, Deluxe and Jax Finance), and one of the Top 40 most influential people in financial technology by the Wall Street Journal’s Financial News.

I was inspired to think more about the legacy challenge in the legacy economies when I saw this article by the inimitable Anna Irrera (she’s worth following if you’re on Twitter). She was lamenting the state of US bank systems and how banks are hiring retired programmers just to keep the lights on. What really struck me were the charts.

That’s real COBOLx (pronounced COBOLicks).

Celent estimate that 80% or more of the $200 billion banks spend on IT (some $160 billion) goes on maintaining legacy systems.

RBS, which paid a record fine to regulators for a big systems outage in 2012, hoped to solve its problems by replacing its core processing engine at a cost of £750 million but, in a recent interview, CEO Ross McEwan conceded there was still a big job ahead in reducing the number of systems and applications at RBS from more than 3,000.

In a related comment, Andrea Orcel, Global Head of UBS’s investment bank, says: “The challenge for most banks is that they are not technologists … As technology continues to evolve at a fast pace, becoming ever more critical to their business, they are having to navigate a space that is both highly complex, and does not play to their core competencies.”

Really? I know I’ve made this point often, but banks are run by bankers when their leadership really should be balanced with technologists, as it is in FinTech firms.

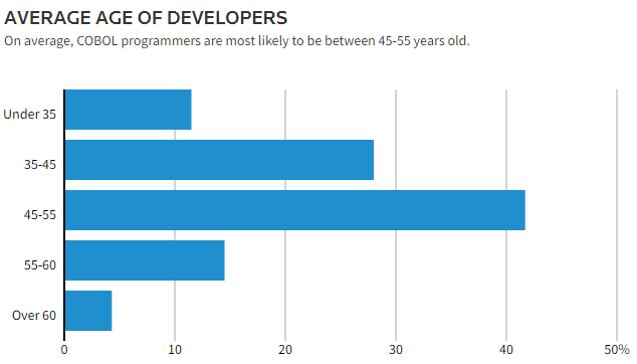

Anyway, this legacy thing is only going to get worse and worse, if for no other reason than that the guys who are maintaining the systems are dying of old age. This is not a new phenomenon either. Back in 2012, Computerworld conducted a survey (http://www.bankingtech.com/375941/cobol-bank-it/) and found that 46% of the IT professionals questioned thought there was a rising COBOL programming shortage, and 50% said the average age of their COBOL staff was 45 or older.

So why are almost half of mainframe systems still locked into COBOL? This is a question asked on Quora, and I found the answers interesting.

The first is from Rogerio Nascimento, who says (abridged version):

If you were to start a company today, in 2017, what type of back- and front-office software would you select? I dare say most likely an ERP system, such as SAP. If you roll the tape back to the 60s or 70s, what would you choose? Banks have the most complex and oldest accounting systems in the market. That’s their core business, in the end: accounting. The top technology for that in the 60s and 70s was a mainframe system running one of the available languages. Among those, the most human-like was COBOL. But more than that, COBOL could handle several of the requirements neatly: reports, batch jobs, sequential file access, etc.

Banks invested millions in designing mainframe system architectures to meet their business requirements, whether that was a bank statement or an online money wiring transaction. To achieve that, they concentrated their time and effort on as few of the available computer languages as possible because, if a large corporation invested in PL/I, for example, it had to train its employees in that language and review all its hardware configurations to best optimise for that language. Then COBOL became a reality, and then it became a liability. Moving away from it meant investing too much money in infrastructure and training, on top of the added risk of failure in mission-critical business functions.

The language then went through several evolutions to keep up with the proprietary times and, along the way, became better and better. Whatever the binary code was back in the 60s, it runs immeasurably faster today thanks to better hardware and software improvements.

Now, I ask the question again in a different way: if you had started a bank in the 60s, had mission-critical software at hand, and had it running smoothly at a very low cost, why would you migrate it to a different language or environment?

I also liked the third answer from Marcus Abbah:

Stability and reliability.

I work in investment banking operations, and a lot of the front-end systems are Java-based. I once asked a senior IT manager why we still used back-end technology from the 70s and 80s, and he remarked on the stability and reliability of COBOL. All the front-end systems experience some sort of downtime or performance degradation. It puts some of our activities at risk, but that risk is not of the same magnitude as a failure in the back-end accounting infrastructure, which runs on COBOL.

Banks, and especially global banks, move a lot of money around, and accounting for all that movement relies on a high degree of stability and reliability. There is very little tolerance for failure. Customers who need to withdraw money need the cast-iron trust that any time they want their money, they can get it. A bank that has a failure, and especially a sustained failure lasting, say, an hour or so, can quickly lose its standing in the market. The reputational damage could be unrecoverable.

The IT budget runs into the hundreds of millions of dollars per annum, so it’s not a question of investment: I am pretty sure the appropriate business case can be made, insofar as reliability and stability can be assured. Frankly, I do not see what solutions are presently available that would assure them.

I can assure you that it’s not about sunk cost, as far as the firm I work for is concerned.

I’m tempted to cut and paste all the answers, but you should read them for yourself. All I will underline is that the 50 years of sunk cost in COBOL spaghetti is the biggest challenge for banks today as we open source our structures. The banks that are moving to an Enterprise Data Architecture that is cloud-based, rationalised and consolidated at the back-end will survive. Those who believe they can stay on those stable and reliable COBOL systems will die. A point I make quite often.

This article originally appeared at: Finanser.com